
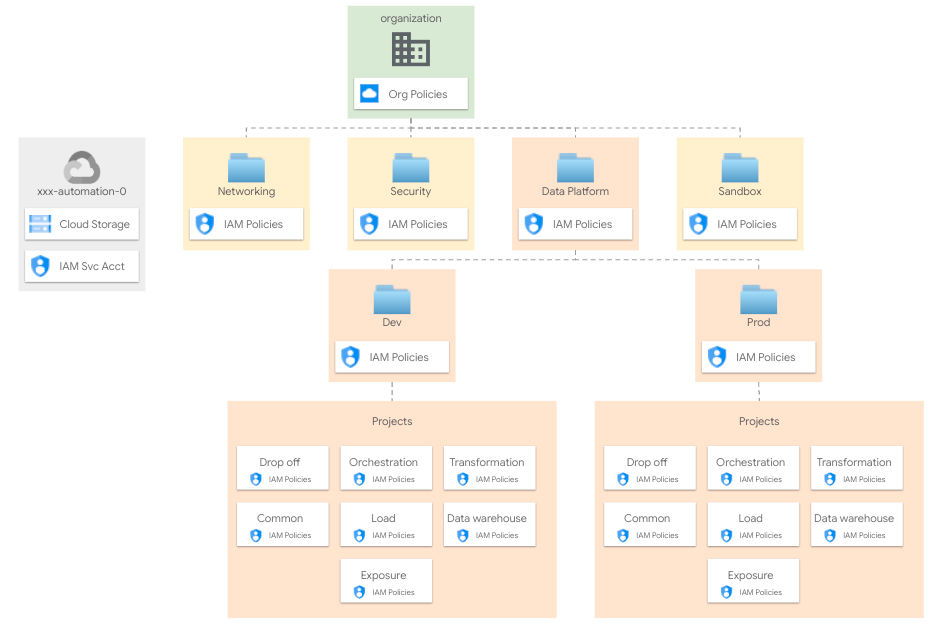

Data Platform

The Data Platform builds on top of your foundations to create and set up projects (and related resources) to be used for your data platform.

Design overview and choices

A more comprehensive description of the Data Platform architecture and approach can be found in the Data Platform module README. The module is wrapped and configured here to leverage the FAST flow.

The Data Platform creates projects in a well-defined context, usually an ad-hoc folder managed by the resource management setup. Resources are organized by environment within this folder.

Across the different data layers, environment-specific projects are created to separate resources and IAM roles.

The Data Platform manages:

- project creation

- API/Services enablement

- service accounts creation

- IAM role assignment for groups and service accounts

- KMS keys roles assignment

- Shared VPC attachment and subnet IAM binding

- project-level organization policy definitions

- billing setup (billing account attachment and budget configuration)

- data-related resources in the managed projects

User groups

As per our GCP best practices, the Data Platform relies on user groups to assign roles to human identities. These are the specific groups used by the Data Platform and their access patterns, from the module documentation:

- Data Engineers. They handle and run the Data Hub, with read access to all resources in order to troubleshoot possible issues with pipelines. This team can also impersonate any service account.

- Data Analysts. They perform analysis on datasets, with read access to the data warehouse Curated or Confidential projects depending on their privileges, and BigQuery READ/WRITE access to the playground project.

- Data Security. They handle security configurations related to the Data Hub. This team has admin access to the common project to configure Cloud DLP templates or Data Catalog policy tags.

| Group | Landing | Load | Transformation | Data Warehouse Landing | Data Warehouse Curated | Data Warehouse Confidential | Data Warehouse Playground | Orchestration | Common |
|---|---|---|---|---|---|---|---|---|---|
| Data Engineers | ADMIN | ADMIN | ADMIN | ADMIN | ADMIN | ADMIN | ADMIN | ADMIN | ADMIN |
| Data Analysts | - | - | - | - | - | READ | READ/WRITE | - | - |
| Data Security | - | - | - | - | - | - | - | - | ADMIN |

Network

A Shared VPC is used here, either from one of the FAST networking stages (e.g. hub and spoke via VPN) or from an external source.

Encryption

Cloud KMS crypto keys can be configured either from the FAST security stage or from an external source. This step is optional and depends on customer policies and security best practices.

To configure the use of Cloud KMS on resources, specify the key id in the `service_encryption_keys` variable. Key locations should match resource locations.

Data Catalog

Data Catalog helps you document your data entries at scale. It relies on tags and tag templates to manage metadata for all data entries in a unified and centralized service. To implement column-level security on BigQuery, we suggest using tags and tag templates.

The default configuration will implement 3 tags:

- 3_Confidential: policy tag for columns that include very sensitive information, such as credit card numbers.

- 2_Private: policy tag for columns that include sensitive personally identifiable information (PII), such as a person's first name.

- 1_Sensitive: policy tag for columns that include data that cannot be made public, such as the credit limit.

Anything that is not tagged is available to all users who have access to the data warehouse.

You can configure your tags and the roles associated with them through the `data_catalog_tags` variable. We suggest using the "Best practices for using policy tags in BigQuery" article as a guide to designing your tag structure and access pattern. By default, no group has access to tagged data.
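As a rough illustration of the `{tag => {ROLE => [MEMBERS]}}` format, the fragment below keeps the three default tag names and grants a single group fine-grained read access to one of them. The group address is a made-up placeholder, and the choice of `roles/datacatalog.categoryFineGrainedReader` is an assumption about which role you would want to bind; adapt both to your own access pattern.

```hcl
# Sketch of a tfvars entry for this stage; the group address is hypothetical.
data_catalog_tags = {
  "3_Confidential" = {}
  "2_Private"      = {}
  "1_Sensitive" = {
    # Assumed role: lets members read columns protected by this policy tag.
    "roles/datacatalog.categoryFineGrainedReader" = [
      "group:gcp-data-analysts@example.com"
    ]
  }
}
```

Setting the variable replaces its default value as a whole, so any tag you still need should be listed explicitly, even with an empty role map.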

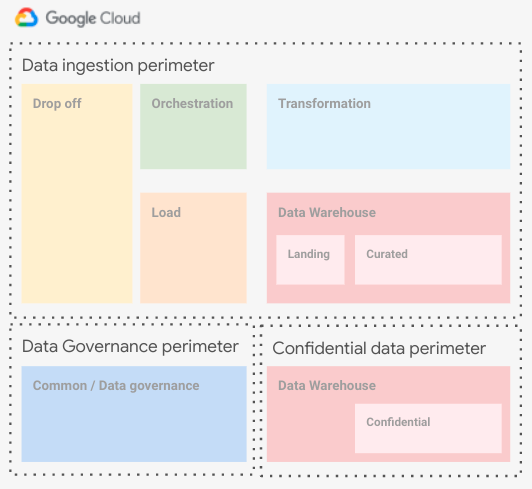

VPC-SC

As is often the case in real-world configurations, VPC-SC is needed to mitigate data exfiltration. VPC-SC can be configured from the FAST security stage. This step is optional but highly recommended, and depends on customer policies and security best practices.

To configure the use of VPC-SC on the data platform, specify the data platform project numbers in the `vpc_sc_perimeter_projects.dev` variable of the FAST security stage.
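A minimal sketch of what that entry might look like in the security stage's tfvars follows. The project numbers are placeholders, and the `projects/NUMBER` value format is an assumption based on how VPC-SC perimeters usually reference resources; check the security stage's variable definition for the exact type and for any other attributes it expects.

```hcl
# Fragment for the FAST security stage (not this stage).
# Project numbers are placeholders; the "projects/NUMBER" format is assumed.
vpc_sc_perimeter_projects = {
  dev = [
    "projects/111111111111",
    "projects/222222222222",
    "projects/333333333333"
  ]
  # Other attributes of this variable, if its type requires them, are omitted here.
}
```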

If your Data Warehouse needs to handle confidential data that must be strictly separated from other data, and IAM alone is not enough, the suggested configuration is to keep the confidential project in a separate VPC-SC perimeter, with the ingress/egress rules needed by the load and transformation service accounts. Below you can find a high-level diagram describing the configuration.

How to run this stage

This stage can be run in isolation by providing the necessary variables, but it's really meant to be used as part of the FAST flow after the "foundational stages" (00-bootstrap, 01-resman, 02-networking and 02-security).

When running in isolation, the following roles are needed on the principal used to apply Terraform:

- on the organization or network folder level
  - `roles/compute.xpnAdmin`, or a custom role which includes the following permissions:
    - `compute.organizations.enableXpnResource`
    - `compute.organizations.disableXpnResource`
    - `compute.subnetworks.setIamPolicy`

- on each folder where projects are created
  - `roles/logging.admin`
  - `roles/owner`
  - `roles/resourcemanager.folderAdmin`
  - `roles/resourcemanager.projectCreator`

- on the host project for the Shared VPC
  - `roles/browser`
  - `roles/compute.viewer`

- on the organization or billing account
  - `roles/billing.admin`

The VPC host project, VPC and subnets should already exist.
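If you manage these prerequisites with Terraform as well, the folder-level roles listed above could be granted along the following lines. This is only a sketch to be applied by an identity that already has IAM admin rights on the folder: the two variables and the resource name are hypothetical and are not part of this stage.

```hcl
# Hypothetical inputs: neither variable exists in this stage.
variable "folder_id" {
  description = "Folder where the Data Platform projects are created, in folders/nnnn format."
  type        = string
}

variable "terraform_principal" {
  description = "IAM member used to apply this stage, e.g. serviceAccount:NAME@PROJECT.iam.gserviceaccount.com."
  type        = string
}

# One non-authoritative binding per role required on the folder.
resource "google_folder_iam_member" "data_platform_stage" {
  for_each = toset([
    "roles/logging.admin",
    "roles/owner",
    "roles/resourcemanager.folderAdmin",
    "roles/resourcemanager.projectCreator",
  ])
  folder = var.folder_id
  role   = each.value
  member = var.terraform_principal
}
```

The organization, host project and billing account roles would need similar bindings on their own resources.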

Providers configuration

If you're running this on top of FAST, you should run the following commands to create the providers file, and populate the required variables from the previous stage.

```bash
# Variable `outputs_location` is set to `~/fast-config` in stage 01-resman
ln -s ~/fast-config/providers/03-data-platform-dev-providers.tf .
```

If you have not configured `outputs_location`, you can derive the providers file from the outputs of the resource management stage:

```bash
cd ../../01-resman
terraform output -json providers | jq -r '.["03-data-platform-dev"]' \
  > ../03-data-platform/dev/providers.tf
```

Variable configuration

There are two broad sets of variables that can be configured:

- variables shared by other stages (organization id, billing account id, etc.) or derived from a resource managed by a different stage (folder id, automation project id, etc.)

- variables specific to resources managed by this stage

To avoid the tedious job of filling in the first group of variables with values derived from other stages' outputs, the same mechanism used above for the provider configuration can be used to leverage pre-configured .tfvars files.

If you configured a valid path for `outputs_location` in the bootstrap, security and networking stages, simply link the relevant `*.auto.tfvars.json` files from this stage's outputs folder under the path you specified. This will also link the providers configuration file:

```bash
# Variable `outputs_location` is set to `~/fast-config`
ln -s ~/fast-config/tfvars/00-bootstrap.auto.tfvars.json .
ln -s ~/fast-config/tfvars/01-resman.auto.tfvars.json .
ln -s ~/fast-config/tfvars/02-networking.auto.tfvars.json .
# also copy the tfvars file used for the bootstrap stage
cp ../../00-bootstrap/terraform.tfvars .
```

If you're not using FAST or its output files, refer to the Variables table at the bottom of this document for a full list of variables, their origin (e.g., a stage or specific to this one), and descriptions explaining their meaning.
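For the second group of variables (those specific to this stage), a small tfvars file is usually enough. The sketch below only uses variables whose names and types appear in the Variables table; the file name and the values are illustrative assumptions, not recommendations.

```hcl
# Hypothetical file name: data-platform.auto.tfvars
# Multi-regional location and region; these match the documented defaults.
location = "eu"
region   = "europe-west1"

# Lets Terraform delete non-empty buckets and datasets on destroy.
# Assumed to be acceptable only in throwaway or test environments.
data_force_destroy = true
```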

Once the configuration is complete you can apply this stage:

```bash
terraform init
terraform apply
```

Demo pipeline

The application layer is out of scope of this script. For demo purposes only, several Cloud Composer DAGs are provided. The demos import data from the landing area to the Data Warehouse Confidential dataset using different features.

You can find examples in the [demo](../../../../blueprints/data-solutions/data-platform-foundations/demo) folder.

Files

| name | description | modules | resources |
|---|---|---|---|
| main.tf | Data Platform. | data-platform-foundations |  |
| outputs.tf | Output variables. |  | google_storage_bucket_object · local_file |
| variables.tf | Terraform Variables. |  |  |

Variables

| name | description | type | required | default | producer |
|---|---|---|---|---|---|
| automation | Automation resources created by the bootstrap stage. | object({…}) | ✓ |  | 00-bootstrap |
| billing_account | Billing account id and organization id ('nnnnnnnn' or null). | object({…}) | ✓ |  | 00-globals |
| folder_ids | Folder to be used for the networking resources in folders/nnnn format. | object({…}) | ✓ |  | 01-resman |
| host_project_ids | Shared VPC project ids. | object({…}) | ✓ |  | 02-networking |
| organization | Organization details. | object({…}) | ✓ |  | 00-globals |
| prefix | Unique prefix used for resource names. Not used for projects if 'project_create' is null. | string | ✓ |  | 00-globals |
| composer_config |  | object({…}) |  | {…} |  |
| data_catalog_tags | List of Data Catalog Policy tags to be created with optional IAM binding configuration in {tag => {ROLE => [MEMBERS]}} format. | map(map(list(string))) |  | {…} |  |
| data_force_destroy | Flag to set 'force_destroy' on data services like BigQuery or Cloud Storage. | bool |  | false |  |
| groups | Groups. | map(string) |  | {…} |  |
| location | Location used for multi-regional resources. | string |  | "eu" |  |
| network_config_composer | Network configurations to use for Composer. | object({…}) |  | {…} |  |
| outputs_location | Path where providers, tfvars files, and lists for the following stages are written. Leave empty to disable. | string |  | null |  |
| project_services | List of core services enabled on all projects. | list(string) |  | […] |  |
| region | Region used for regional resources. | string |  | "europe-west1" |  |
| service_encryption_keys | Cloud KMS to use to encrypt different services. Key location should match service region. | object({…}) |  | null |  |
| subnet_self_links | Shared VPC subnet self links. | object({…}) |  | null | 02-networking |
| vpc_self_links | Shared VPC self links. | object({…}) |  | null | 02-networking |

Outputs

| name | description | sensitive | consumers |
|---|---|---|---|
| bigquery_datasets | BigQuery datasets. |  |  |
| demo_commands | Demo commands. |  |  |
| gcs_buckets | GCS buckets. |  |  |
| kms_keys | Cloud KMS keys. |  |  |
| projects | GCP projects information. |  |  |
| vpc_network | VPC network. |  |  |
| vpc_subnet | VPC subnetworks. |  |  |