
Scheduled Cloud Asset Inventory Export to BigQuery

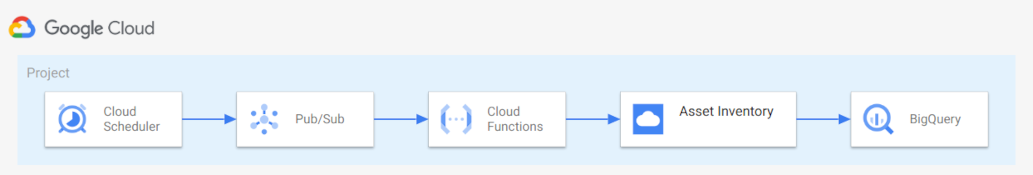

This example shows how to use the Cloud Asset Inventory export-to-BigQuery feature to keep track of your organization-wide assets over time by storing the information in BigQuery.

The data stored in BigQuery can then be used for different purposes:

- dashboarding

- analysis

This example shows an export to BigQuery scheduled on a daily basis.

The resources created in this example are shown in the high-level diagram at the top of this page.

Prerequisites

Ensure that you grant your account one of the following roles on your project, folder, or organization.

- Cloud Asset Viewer role (roles/cloudasset.viewer)

- Owner primitive role (roles/owner)

Running the example

Clone this repository, specify your variables in a terraform.tfvars file, and then run the following commands to create the resources:

    terraform init
    terraform apply

Once done testing, you can clean up resources by running terraform destroy. To persist state, check out the backend.tf.sample file.

Testing the example

You can now run queries on the data you exported to BigQuery. The Cloud Asset Inventory documentation on exporting to BigQuery includes some example queries you can run.
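As one illustration of the kind of query mentioned above, the following minimal sketch builds a query that counts exported assets per asset type. It assumes the export table exposes an `asset_type` column, as the standard Cloud Asset Inventory export layout does. The project, dataset, and table names are hypothetical placeholders, and the `run_query` helper is an assumption that relies on the `google-cloud-bigquery` package and application default credentials being available.

```python
"""Sketch: count exported assets by type (all identifiers are placeholders)."""


def build_asset_count_query(project_id: str, dataset: str, table: str) -> str:
    """Return a standard SQL query counting exported assets per asset type."""
    # Backticks quote the fully qualified table name in BigQuery standard SQL.
    table_ref = f"`{project_id}.{dataset}.{table}`"
    return (
        "SELECT asset_type, COUNT(*) AS asset_count\n"
        f"FROM {table_ref}\n"
        "GROUP BY asset_type\n"
        "ORDER BY asset_count DESC"
    )


def run_query(sql: str):
    """Run the query and return its rows.

    Assumes google-cloud-bigquery is installed and credentials are configured.
    """
    # Imported lazily so the query builder works without the client library.
    from google.cloud import bigquery

    client = bigquery.Client()
    return list(client.query(sql).result())


if __name__ == "__main__":
    # Hypothetical identifiers: replace with your own project, dataset and table.
    print(build_asset_count_query("my-project", "my_dataset", "my_table"))
```

Keeping the query builder separate from the client call makes it possible to inspect or paste the SQL into the BigQuery console before running it programmatically.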

You can also create a dashboard by connecting Datalab or any other BI tool of your choice to your BigQuery dataset.

Variables

| name | description | type | required | default |
|---|---|---|---|---|
| cai_config | Cloud Asset Inventory export config. | object({...}) | ✓ | |
| project_id | Project id that references existing project. | string | ✓ | |
| bundle_path | Path used to write the intermediate Cloud Function code bundle. | string | | ./bundle.zip |
| name | Arbitrary string used to name created resources. | string | | asset-inventory |
| project_create | Create project instead of using an existing one. | bool | | false |
| region | Compute region used in the example. | string | | europe-west1 |

Outputs

| name | description | sensitive |
|---|---|---|
| bq-dataset | BigQuery instance details. | |
| cloud-function | Cloud Function instance details. | |